As artificial intelligence becomes increasingly embedded in the tech industry, the conversation is shifting from how we govern AI to a more fundamental question: who is responsible for ensuring it is inclusive?

While public policy often dominates discussions about AI, the most immediate and influential responsibility sits with companies and the wider tech community. These groups are already shaping what “inclusive AI” looks like in practice, long before any formal regulation catches up.

Let’s explore the various approaches businesses and tech communities can use to embrace this shift and help reduce bias risk.

AI Support for Fairer Hiring

For businesses, it’s essential to integrate inclusive AI practices for fairer hiring – helping to mitigate biases, promote diversity, and focus on candidate skills rather than demographic data. Key practices include:

- Bias-Free Job Descriptions: Using AI to flag and remove biased language from job postings so they can attract a wider range of candidates.

- Anonymous, Skills-First Screening: Applying AI to review resumes based only on skills and experience, removing personal identifiers to limit unconscious bias.

- Inclusive Training Data: Training AI models on diverse datasets to prevent reinforcing existing inequalities.

- Human Oversight: Keeping final hiring decisions people-driven, using AI as a support tool rather than the ultimate decision-maker.
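To make the anonymous, skills-first screening step more concrete, here is a minimal sketch of how personal identifiers might be stripped from a candidate record before any reviewer or model sees it. The field names (`name`, `summary`, and so on) and the two patterns are illustrative assumptions, not the schema of any particular applicant-tracking system, and simple patterns like these will not catch every identifier in free text.

```python
import re

# Hypothetical field names; a real applicant-tracking export will differ.
IDENTIFYING_FIELDS = {
    "name", "email", "phone", "date_of_birth", "gender", "photo_url", "address",
}

# Deliberately simple patterns for illustration only.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def anonymise(candidate: dict) -> dict:
    """Return a copy with identifying fields dropped and contact
    details masked in the free-text summary (if there is one)."""
    cleaned = {k: v for k, v in candidate.items() if k not in IDENTIFYING_FIELDS}
    if "summary" in cleaned:
        text = EMAIL_RE.sub("[redacted email]", cleaned["summary"])
        cleaned["summary"] = PHONE_RE.sub("[redacted phone]", text)
    return cleaned


candidate = {
    "name": "Alex Example",
    "email": "alex@example.com",
    "skills": ["python", "sql"],
    "years_experience": 5,
    "summary": "Reach me at alex@example.com or +44 20 7946 0958.",
}
screened = anonymise(candidate)
```

Only skills, experience, and the masked summary are passed on, so the final decision still rests with a human reviewer working from skills-based information.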

These practices should be backed by continuous auditing, with AI algorithms regularly assessed for fairness. A useful guide for dividing the work is a 30/70 rule: AI handles the roughly 30% of tasks that are repetitive and operational, while humans handle the 70% involving people-first strategies.

Transparency as a Foundation for Trust

Transparency has become the foundation of inclusive AI. But it’s not just about publishing model cards or releasing high-level principles. It requires companies to communicate clearly, openly and consistently about how their AI systems work and what their limitations are.

The responsibility here is shared:

- Companies can create clarity by sharing how their models make decisions

- Teams can openly communicate data strengths, gaps, and considerations

- Communities can be welcomed into conversations to offer insights and perspectives

When people understand how AI works, they can identify blind spots, highlight cultural nuances, and flag unintended consequences.

This level of openness builds trust with clients, users, and internal teams. Transparency builds confidence, and confidence builds adoption.

Auditing Standards

As AI systems scale, auditing becomes an essential, ongoing commitment: it must be continuous, collaborative, and interdisciplinary.

Responsibility is distributed across several groups:

- Developers can help ensure fairness by assessing models before deployment

- Product teams can stay close to real-world impact and user experience

- Communities can offer lived experience insights that enrich and strengthen AI design

The most inclusive AI systems are those shaped by multiple perspectives, not just technical expertise.
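As one illustration of what assessing a model before deployment could involve, the sketch below compares shortlisting rates across groups and flags any group whose rate falls below a chosen fraction of the highest rate. The 0.8 threshold follows the widely used "four-fifths" rule of thumb from US employment guidance; it is an assumption here, not a standard this article prescribes, and the function names and sample data are hypothetical. A check like this is a starting point for discussion, not proof that a system is fair.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """Map each group to the share of its candidates who were shortlisted.

    `outcomes` is an iterable of (group, shortlisted) pairs.
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, shortlisted in outcomes:
        totals[group] += 1
        if shortlisted:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def flag_groups(outcomes, threshold=0.8):
    """Return groups whose rate is below `threshold` times the highest rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values(), default=0)
    if best == 0:
        return []  # nobody was shortlisted, so there is nothing to compare
    return sorted(g for g, rate in rates.items() if rate / best < threshold)


# Made-up audit sample: group A shortlisted 6 of 10, group B 3 of 10.
sample = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)
flagged = flag_groups(sample)
```

Any flagged group would then go to product teams and community reviewers for the kind of human, interdisciplinary follow-up described above.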

People-First Approach

A people-first approach to AI governance shifts the focus from “What can we build?” to “Who are we responsible for protecting?”. Inclusive AI goes beyond representation – it’s about safeguarding people’s rights, autonomy, and ability to participate fairly in digital systems.

Companies embracing this mindset are beginning to embed principles such as:

- The right to clear explanations

- The right to question or challenge automated decisions

- The right to privacy and control over personal data

- The right to equitable treatment across all demographics

Ensuring these principles are upheld is a shared responsibility across every organisation building or deploying AI. It requires teams to understand not only how AI works technically, but also the ethical, social, and human impact of the systems they create.

Paving the Way to an Inclusive Future

So, who is responsible for inclusive AI?

The answer is simple: everyone involved in creating, deploying, or being impacted by AI.

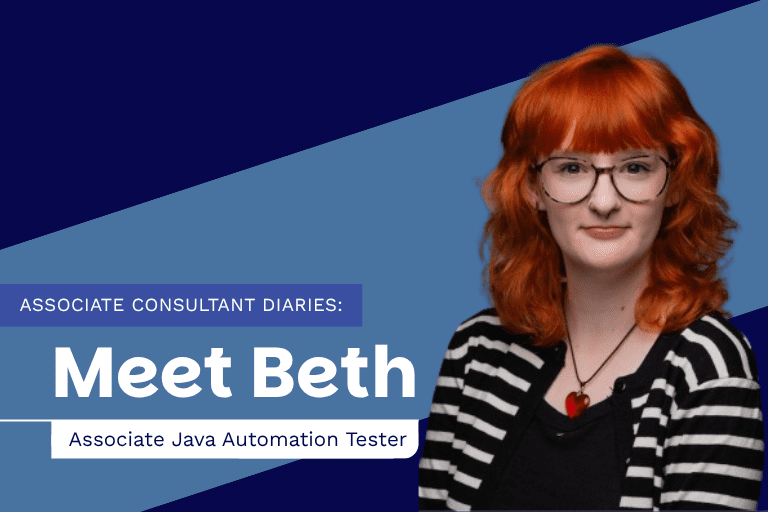

Inclusive AI isn’t the responsibility of a single department or role – it’s a shared commitment. And this year, we’re bringing this conversation to the forefront. By empowering organisations with the knowledge, skills, and frameworks needed for responsible AI, we strive to help businesses turn inclusion from an aspiration into a measurable, everyday practice.

And the conversation doesn’t stop here…

This year, we’ll be bringing together ED&I leaders, talent professionals and technical leaders to explore how organisations are embedding AI into recruitment practices, discussing:

- How to reduce bias in AI-driven screening tools to support fairer hiring

- Accountability between HR, tech, and vendors

- Governance and ethical guardrails

Stay tuned for more event details!

Looking to improve your hiring strategy?

Get in touch to find out how our expert team can help.